Three people co-founded Nvidia in 1993:[2]

- Jen-Hsun Huang (CEO as of 2008), previously Director of CoreWare at LSI Logic and a microprocessor designer at Advanced Micro Devices (AMD)

- Chris Malachowsky, an electrical engineer who worked at Sun Microsystems

- Curtis Priem, previously a senior staff engineer and graphics chip designer at Sun Microsystems

The founders gained venture capital funding from Sequoia Capital.[3]

In 2000, Nvidia acquired the intellectual assets of its one-time rival 3dfx, one of the biggest graphics companies of the mid- to late-1990s.[4]

In July 2002, Nvidia acquired Exluna for an undisclosed sum. Exluna made software rendering tools, and its staff were merged into the Cg project.[5]

In August 2003, Nvidia acquired MediaQ for approximately $70 million.[6]

On April 22, 2004, Nvidia acquired iReady, a provider of high-performance TCP/IP and iSCSI offload solutions.[7]

On December 14, 2005, Nvidia acquired ULI Electronics, which at the time supplied third-party southbridge parts for chipsets to ATI, Nvidia's competitor.[8]

In March 2006, Nvidia acquired Hybrid Graphics.[9]

In December 2006, Nvidia, along with its main rival in the graphics industry AMD (which had acquired ATI), received subpoenas from the U.S. Department of Justice regarding possible antitrust violations in the graphics card industry.[10]

Forbes magazine named Nvidia its Company of the Year for 2007, citing its accomplishments during that year and the preceding five years.[11]

On January 5, 2007, Nvidia announced that it had completed the acquisition of PortalPlayer, Inc.[12]

In February 2008, Nvidia acquired Ageia Technologies for an undisclosed sum. "The purchase reflects both companies' shared goal of creating the most amazing and captivating game experiences," said Jen-Hsun Huang, president and CEO of Nvidia. "By combining the teams that created the world's most pervasive GPU and physics engine brands, we can now bring GeForce-accelerated PhysX to twelve million gamers around the world."[13] (The press release mentioned neither the acquisition cost nor any plans for specific products.)

Branding

The company's name combines an initial n (a letter usable as a pronumeral in mathematical statements) and the root of video (from Latin videre, "to see"), thus implying "the best visual experience"[citation needed] or perhaps "immeasurable display."[original research?] The sound of the name Nvidia suggests "envy" (Spanish: eNVIDIA; Latin, Italian, or Romanian: iNVIDIA); and NVIDIA's GeForce 8 series product (manufactured 2006-2008) used the slogan "Green with envy."

The company name is officially written entirely in upper case ("NVIDIA"), and appears that way in the company's technical documentation, website and press releases.[14] The mixed-case form ("nVIDIA," with a full-height, lower-case "n") appears only in the corporate logo, although the same lower-case "n" prefix appears in other NVIDIA trademarks ("nDemand", "nView", "nZone").

Products

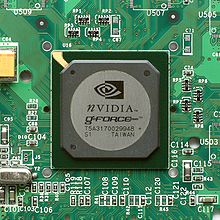

Nvidia's product portfolio includes graphics processors, wireless communications processors, PC platform (motherboard core logic) chipsets, and digital media player software. Computer users arguably know Nvidia best for its "GeForce" product line. This line comprises a complete range of discrete graphics chips found in add-in board (AIB) video cards, as well as the core graphics technology used in nForce motherboards, the Microsoft Xbox game console, and Sony's PlayStation 3 game console.

In many respects Nvidia resembles its competitor ATI. Both companies began with a focus on the PC market and later expanded into chips for non-PC applications. As part of their operations, both ATI and Nvidia create reference designs (circuit board schematics) and provide manufacturing samples to their board partners. However, unlike ATI, Nvidia does not sell graphics boards into the retail market and instead focuses on the development of GPU chips. As a fabless semiconductor company, Nvidia contracts out the manufacture of its chips to Taiwan Semiconductor Manufacturing Company, Ltd. (TSMC). Manufacturers of Nvidia video cards include EVGA, Foxconn, and PNY. The manufacturers ASUS, ECS, Gigabyte Technology, MSI, Palit, and XFX produce both ATI and NVIDIA cards.

December 2004 saw the announcement that Nvidia would assist Sony with the design of the graphics processor (RSX) in the PlayStation 3 game console. In March 2006 it emerged that Nvidia would deliver RSX to Sony as an IP core, and that Sony alone would organize the manufacture of the RSX. Under the agreement, Nvidia will provide ongoing support to port the RSX to Sony's fabs of choice (Sony and Toshiba), as well as die shrinks to 65 nm. This practice contrasts with Nvidia's business arrangement with Microsoft, in which Nvidia managed production and delivery of the Xbox GPU through NVIDIA's usual third-party foundry contracts. Meanwhile, Microsoft chose[when?] to license a design by ATI and to make its own manufacturing arrangements for the Xbox 360 graphics hardware, as has Nintendo for the Wii console (which succeeds the ATI-based Nintendo GameCube).

On February 4, 2008, Nvidia announced plans to acquire physics-software producer Ageia, whose PhysX physics engine program formed part of hundreds of games shipping or in development for PlayStation 3, Xbox 360, Wii, and gaming PCs.[15] This transaction completed on February 13, 2008[16] and efforts to integrate PhysX into the GeForce 8800's CUDA system began.[17][18]

On June 2, 2008 NVIDIA officially announced its new Tegra product line.[19] The Tegra, a system-on-a-chip (SoC), integrates an ARM CPU, GPU, northbridge and southbridge onto a single chip. Commentators[who?] opine that NVIDIA will target this product at the smartphone and mobile Internet device markets.

Graphics chipsets

- NV1: Nvidia's first product, based on quadratic surfaces

- RIVA 128 and RIVA 128ZX: DirectX 5 support, OpenGL 1 support, NVIDIA's first DirectX-compliant hardware

- RIVA TNT, RIVA TNT2: DirectX 6 support, OpenGL 1 support, Nvidia's first market-leading[citation needed] product

- NVIDIA GeForce: graphics processors for personal computers

- NVIDIA Quadro: graphics processors for professional workstations

- NVIDIA Tesla: dedicated GPGPU processors for high-performance computing systems

- NVIDIA GoForce: media processors featuring nPower technology for PDAs and mobile phones

- NVIDIA Tegra: system-on-a-chip with an ARM processor for mobile devices such as smartphones, PDAs, and MIDs

- GPUs for game consoles:

  - Xbox: GeForce3-class GPU (on an Intel Pentium III/Celeron platform)

  - PlayStation 3: RSX 'Reality Synthesizer'

Desktop motherboard chipsets

- nForce series

- nForce: AMD Athlon/Athlon XP/Duron K7 CPUs (System Platform Processor (SPP) and Media and Communications Processor (MCP) or GeForce2 MX-class Integrated Graphics Processor (IGP) and MCP, SoundStorm available)[20]

- nForce2: AMD Athlon/Athlon XP/Duron/Sempron K7 CPUs (SPP + MCP or GeForce4 MX-class IGP + MCP, SoundStorm available)

- nForce3: AMD Athlon 64/Athlon 64 X2/Athlon 64 FX/Opteron/Sempron K8 CPUs (unified MCP only)

- nForce4

- nForce 500

- AMD: Athlon 64/Athlon 64 X2/Athlon 64 FX/Opteron/Sempron K8 CPUs (unified MCP or SPP + MCP)

- Intel: Pentium 4/Pentium 4 Extreme Edition/Pentium D/Pentium Extreme Edition/Pentium Dual-Core/Core 2 Duo/Core 2 Extreme/Celeron/Celeron D NetBurst and Core 2 CPUs (SPP + MCP only)

- nForce 600

- AMD: Athlon 64/Athlon 64 X2/Athlon 64 FX/Opteron/Sempron K8 CPUs, Quad FX-capable (unified MCP or MCP paired with GeForce 7000 series/GeForce 7100 series IGP)

- Intel: Pentium 4/Pentium 4 Extreme Edition/Pentium D/Pentium Extreme Edition/Pentium Dual-Core/Core 2 Duo/Core 2 Extreme/Core 2 Quad/Celeron/Celeron D NetBurst and Core 2 CPUs (SPP + MCP or MCP paired with GeForce 7000 series/GeForce 7100 series IGP)

- nForce 700

- nForce 900: AMD Athlon 64/Athlon 64 X2/Athlon 64 FX/Athlon X2/Athlon II X2/Athlon II X3/Athlon II X4/Opteron/Phenom X3/Phenom X4/Phenom II X2/Phenom II X3/Phenom II X4/Sempron K8 and K10 CPUs[21]

Documentation and drivers

Nvidia does not publish documentation for its hardware, so programmers cannot write suitable, effective open-source drivers for Nvidia's products (compare Graphics hardware and FOSS). Instead, Nvidia provides its own binary GeForce graphics drivers for X.Org, along with a thin open-source library that interfaces between the Linux, FreeBSD or Solaris kernels and the proprietary graphics software. Nvidia also supports an obfuscated open-source driver that provides only two-dimensional hardware acceleration and ships with the X.Org distribution. NVIDIA's Linux support has promoted the adoption of its hardware in the entertainment, scientific visualization, defense and simulation/training industries, which were traditionally dominated by SGI, Evans & Sutherland, and other relatively costly vendors.[citation needed]

The proprietary nature of Nvidia's drivers has caused dissatisfaction within free-software communities. Some Linux and BSD users insist on using only open-source drivers. They regard Nvidia's insistence on providing nothing more than a binary-only driver as wholly inadequate, given that competing manufacturers (like Intel) offer support and documentation for open-source developers, and that others (like ATI) release partial documentation.[22] Because of the closed nature of the drivers, Nvidia video cards do not deliver adequate features on some platforms and architectures, although this has been attributed[by whom?] to the lack of a suitable kernel API for implementing them. Support for three-dimensional graphics acceleration in Linux on the PowerPC does not exist, and Linux on the hypervisor-restricted PlayStation 3 console is not supported at all. While some users accept the NVIDIA-supported drivers, many users of open-source software would prefer better out-of-the-box performance if given the choice.[23] However, the performance and functionality of the binary Nvidia video card drivers surpass those of open-source alternatives[citation needed] following VESA standards.

X.Org Foundation and Freedesktop.org have started the Nouveau project, which aims to develop free-software drivers for NVIDIA graphics cards by reverse engineering Nvidia's current proprietary drivers for Linux.

Market share

According to a survey by the market research firm Jon Peddie Research,[24] Nvidia shipped an estimated 33.00 million graphics chips in the first quarter of 2010, giving it a market share of 31.5%. ATI and Intel shipped an estimated 25.15 million units (24.0% market share) and 45.49 million units (43.5% market share), respectively. NVIDIA's year-over-year growth was 41.9%.
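As a rough consistency check on the survey figures above, dividing each vendor's units by its share should give approximately the same total market size. The three listed shares add up to 99.0%, so the remaining units presumably belong to other vendors. The following is a minimal Python sketch. It assumes each share is a fraction of total graphics-chip unit shipments, which the text does not state explicitly, and the names `Q1_2010`, `implied_total` and `summarize` are hypothetical.

```python
from __future__ import annotations

# Q1 2010 estimates quoted above: (units shipped in millions, market share).
# Assumption: each share is a fraction of total graphics-chip unit shipments.
Q1_2010 = {
    "Nvidia": (33.00, 0.315),
    "ATI": (25.15, 0.240),
    "Intel": (45.49, 0.435),
}


def implied_total(units: float, share: float) -> float:
    """Total market size (millions of units) implied by one vendor's figures."""
    return units / share


def summarize(data: dict[str, tuple[float, float]]) -> dict[str, float]:
    """Cross-check the vendors' figures against each other."""
    listed_units = sum(units for units, _ in data.values())
    listed_share = sum(share for _, share in data.values())
    totals = [implied_total(units, share) for units, share in data.values()]
    market = sum(totals) / len(totals)  # average implied market size
    return {
        "listed_units": listed_units,
        "listed_share": listed_share,
        "implied_market": market,
        "other_vendors": market - listed_units,  # units not held by the three
    }


if __name__ == "__main__":
    for key, value in summarize(Q1_2010).items():
        print(f"{key}: {value:.3f}")
    # A 41.9% year-over-year rise implies Nvidia's Q1 2009 volume of roughly:
    print(f"Nvidia Q1 2009 (implied): {33.00 / 1.419:.2f} million")
```

The three implied totals fall between about 104.6 and 104.8 million units. That leaves roughly 1.1 million units for vendors not named in the survey summary, so the quoted figures are at least internally consistent.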

According to the monthly Steam hardware survey conducted by the game developer Valve,[25] NVIDIA held 59.11% of the PC video-card gaming market as of June 2010, and ATI held 32.98%.

Market history

Before DirectX

Nvidia released its first graphics card, the NV1, in 1995. Its design used quadratic surfaces, with an integrated playback-only sound card and ports for Sega Saturn gamepads. Because the Saturn also used forward-rendered quadratics, programmers ported several Saturn games to play on a PC with NV1, such as Panzer Dragoon and Virtua Fighter Remix. However, the NV1 struggled in a marketplace full of several competing proprietary standards.

Market interest in the product ended when Microsoft announced the DirectX specifications, which were based on polygons. NV1 development then continued internally as the NV2 project, funded by several million dollars of investment from Sega. Sega hoped that an integrated chip with both graphics and sound capabilities would cut the manufacturing cost of its next console. However, Sega eventually recognized the flaws in implementing quadratic surfaces, and the NV2 project never produced a finished product.[citation needed]

Transition to DirectX

Nvidia's CEO Jen-Hsun Huang realized at this point that after two failed products, something had to change for the company to survive. He hired David Kirk as Chief Scientist from software developer Crystal Dynamics. Kirk would combine NVIDIA's experience in 3D hardware with an intimate understanding of practical implementations of rendering.

As part of the corporate transformation, Nvidia sought to provide full support for DirectX, and dropped multimedia functionality in order to reduce manufacturing costs. Nvidia also adopted the goal of an internal six-month product cycle, based on the expectation that it could mitigate a failure of any one product by having a replacement moving through the development pipeline.

However, since the Sega NV2 contract remained secret, and since Nvidia had recently laid off employees, it appeared to many industry observers[who?] that Nvidia had ceased active research and development. So when Nvidia first announced the RIVA 128 in 1997, the market found the specifications hard to believe: performance superior to market-leader 3dfx Voodoo Graphics, and a fully hardware-based triangle setup engine. The RIVA 128 shipped in volume, and the combination of its low cost and high performance made it a popular choice for OEMs.

Ascendancy: RIVA TNT

Having finally developed and shipped in volume a market-leading integrated graphics chipset, Nvidia set itself the goal of doubling the number of pixel pipelines in its chip, in order to realize a substantial performance gain. The TwiN Texel (RIVA TNT) engine which Nvidia subsequently developed could either apply two textures to a single pixel, or process two pixels per clock cycle. The former case allowed for improved visual quality, the latter for doubling the maximum fillrate.

New features included a 24-bit Z-buffer with 8-bit stencil support, anisotropic filtering, and per-pixel MIP mapping. In certain respects (such as transistor count), the TNT had begun to rival Intel's Pentium processors in complexity. However, despite its wide range of integrated quality features, the TNT failed to displace the market leader, 3dfx's Voodoo2, because its actual clock rate ended up at only 90 MHz, about 35% lower than expected.

Nvidia followed with a refresh part: a die shrink of the TNT architecture from 350 nm to 250 nm. A stock TNT2 ran at 125 MHz and a TNT2 Ultra at 150 MHz. Although the Voodoo3 reached the market before Nvidia's part, 3dfx's offering proved disappointing. It was not much faster, and it lacked features that were becoming standard, such as 32-bit color and textures with a resolution greater than 256 x 256 pixels.
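The "TwiN Texel" trade-off described above (two pixels per clock, or one pixel with two textures) can be made concrete with the clock rates quoted in this section. The following is a minimal Python sketch. It assumes each of the two pipelines applies one texture per clock, so a dual-textured pixel occupies both pipelines. It also assumes the TNT2 kept the same two-pipeline layout, since the text describes it as a die shrink. The names `PIPELINES`, `CLOCKS_MHZ` and `pixel_fillrate` are hypothetical.

```python
# Peak pixel fillrate of the two-pipeline "TwiN Texel" design described above.
# Assumption: each pipeline applies one texture per clock, so a dual-textured
# pixel occupies both pipelines for that clock. The TNT2 is assumed to keep
# the same two-pipeline layout, since the text describes it as a die shrink.
PIPELINES = 2

CLOCKS_MHZ = {
    "RIVA TNT": 90,
    "RIVA TNT2": 125,
    "RIVA TNT2 Ultra": 150,
}


def pixel_fillrate(clock_mhz: float, textures_per_pixel: int) -> float:
    """Peak fillrate in megapixels per second."""
    if textures_per_pixel not in (1, 2):
        raise ValueError("a TNT-style core applies one or two textures per pixel")
    pixels_per_clock = PIPELINES // textures_per_pixel
    return clock_mhz * pixels_per_clock


if __name__ == "__main__":
    for chip, clock in CLOCKS_MHZ.items():
        single = pixel_fillrate(clock, 1)
        dual = pixel_fillrate(clock, 2)
        print(f"{chip}: {single} Mpixel/s single-textured, {dual} Mpixel/s dual-textured")
```

In both modes the core produces two texels per clock. The mode only determines whether those texels go to one pixel (better visual quality) or two (double the fillrate).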

The RIVA TNT2 marked a major turning point for Nvidia. They had finally delivered a product competitive with the fastest on the market, with a superior feature set, strong 2D functionality, all integrated onto a single die with strong yields, and that ramped to impressive clock rates. Nvidia's six-month cycle refresh took the competition by surprise, giving it the initiative in rolling out new products.

Market leadership: GeForce

The northern-hemisphere autumn of 1999 saw the release of the GeForce 256 (NV10), most notably introducing on-board transformation and lighting (T&L) to consumer-level 3D hardware. Running at 120 MHz and featuring four pixel pipelines, it implemented advanced video acceleration, motion compensation, and hardware sub-picture alpha blending. The GeForce outperformed existing products - including the ATI Rage 128, 3dfx Voodoo3, Matrox G400 MAX, and RIVA TNT2 - by a wide margin.

Due to the success of its products, Nvidia won the contract to develop the graphics hardware for Microsoft's Xbox game console, which earned Nvidia a $200 million advance. However, the project drew the time of many of Nvidia's best engineers away from other projects. In the short term this did not matter, and the GeForce2 GTS shipped in the summer of 2000.

By this time Nvidia had gained extensive manufacturing experience with its highly integrated cores, and the GTS benefited because Nvidia was able to optimize the core for higher clock rates. The volume of chips Nvidia produced also allowed it to sort parts by quality: it could pick the highest-quality cores from the same batch as regular parts for its premium range. As a result, the GTS shipped at 200 MHz. Compared with the GeForce 256, pixel fillrate nearly doubled and texel fillrate nearly quadrupled, because multi-texturing was added to each pixel pipeline. New features included S3TC compression, FSAA, and improved MPEG-2 motion compensation.
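The "nearly doubled" and "nearly quadrupled" claims follow from a simple peak-fillrate model: clock rate times pipelines, multiplied by the number of texture units per pipeline to get texels. The following is a minimal Python sketch. The clocks and the GeForce 256's four pipelines come from the text. The GTS's four pipelines and the per-pipeline texture-unit counts (one for the GeForce 256, two for the GTS) are assumptions, and the `Core` class is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Core:
    """Peak-fillrate model: clock x pipelines (x texture units per pipeline)."""
    name: str
    clock_mhz: float
    pipelines: int
    tmus_per_pipeline: int  # texture units per pixel pipeline

    @property
    def pixel_fillrate(self) -> float:
        """Peak megapixels per second."""
        return self.clock_mhz * self.pipelines

    @property
    def texel_fillrate(self) -> float:
        """Peak megatexels per second."""
        return self.pixel_fillrate * self.tmus_per_pipeline


# Clocks and the GeForce 256's four pipelines come from the text; the GTS's
# pipeline count and both texture-unit counts are assumptions.
GEFORCE_256 = Core("GeForce 256", clock_mhz=120, pipelines=4, tmus_per_pipeline=1)
GEFORCE2_GTS = Core("GeForce2 GTS", clock_mhz=200, pipelines=4, tmus_per_pipeline=2)

if __name__ == "__main__":
    pixel_gain = GEFORCE2_GTS.pixel_fillrate / GEFORCE_256.pixel_fillrate
    texel_gain = GEFORCE2_GTS.texel_fillrate / GEFORCE_256.texel_fillrate
    print(f"pixel fillrate x{pixel_gain:.2f}, texel fillrate x{texel_gain:.2f}")
```

Under these assumptions the GTS reaches about 1.67 times the pixel fillrate and 3.33 times the texel fillrate of the GeForce 256. This is in line with the text's "nearly doubled" and "nearly quadrupled."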

In 2000 Nvidia shipped the GeForce2 MX, intended for the budget and OEM market. It had two fewer pixel pipelines and ran at 165 MHz (later at 250 MHz). Offering strong performance at a mid-range price, the GeForce2 MX became one of the most successful graphics chipsets. NVIDIA also shipped a mobile derivative called the GeForce2 Go at the end of 2000.

Nvidia's success left 3dfx unable to recover its past market share. The long-delayed Voodoo 5, the successor to the Voodoo3, did not compare favorably with the GeForce2 in either price or performance, and failed to generate the sales needed to keep the company afloat. With 3dfx on the verge of bankruptcy near the end of 2000, Nvidia purchased most of 3dfx's intellectual property (in dispute at the time).[citation needed] NVIDIA acquired anti-aliasing expertise and about 100 engineers, but not the company itself, which filed for bankruptcy in 2002.

Nvidia developed the GeForce3, which pioneered DirectX 8 vertex and pixel shaders, and eventually refined it with the GeForce4 Ti line. Nvidia announced the GeForce4 Ti, MX, and Go in January 2002, one of the largest releases in Nvidia's history. The chips in the Ti and Go series differed only in chip and memory clock rates. The MX series lacked the pixel and vertex shader functionalities; it derived from GeForce2 level hardware and assumed the GeForce2 MX's position in the value segment.

Stumbles with the FX series

At this point Nvidia dominated the GPU market. However, ATI Technologies remained competitive due to its new Radeon product, which had performance comparable to the GeForce2 GTS. Though ATI's answer to the GeForce3, the Radeon 8500, came later to market and initially suffered from issues with drivers, the 8500 proved a superior competitor due to its lower price.[citation needed] NVIDIA countered ATI's offering with the GeForce4 Ti line. ATI concentrated efforts on its next-generation Radeon 9700 rather than on directly challenging the GeForce4 Ti.

During the development of the next-generation GeForce FX chips, many Nvidia engineers focused on the Xbox contract. Nvidia also had a contractual obligation to develop newer and more hack-resistant NV2A chips, and this requirement left even fewer engineers to work on the FX project. Since the Xbox contract did not anticipate or encompass falling manufacturing costs, Microsoft sought to re-negotiate the terms of the contract, and relations between NVIDIA and Microsoft deteriorated as a result. The two companies later settled the dispute through arbitration without releasing the terms of the settlement to the public.

Following their dispute, Microsoft did not consult Nvidia during the development of the DirectX 9 specification, which allowed ATI to shape much of the specification itself. During this time, ATI limited rendering color support to 24-bit floating point,[citation needed] and emphasized shader performance. Microsoft also built the shader compiler using the Radeon 9700 as the base card. In contrast, Nvidia's cards offered 16- and 32-bit floating-point modes. This forced a choice between lower visual quality than the competition (16-bit) and slower performance (32-bit). The 32-bit support also made the chips much more expensive to manufacture, because it required a higher transistor count. Shader performance was often half or less of the speed of ATI's competing products. Nvidia had made its reputation by designing easy-to-manufacture DirectX-compatible parts, but it misjudged Microsoft's next standard and paid a heavy price. As more and more games began to rely on DirectX 9 features, the poor shader performance of the GeForce FX series became more obvious. With the exception of the FX 5700 series (a late revision), the FX series did not compete well against ATI cards.

Nvidia released an "FX only" demo called "Dawn", but a hacked wrapper enabled it to run on a Radeon 9700, where it ran faster despite translation overhead. Nvidia began to use application detection to optimize its drivers. Hardware review sites published articles showing that Nvidia's driver auto-detected benchmarks and that it produced artificially inflated scores that did not relate to real-world performance.[citation needed] Often tips from ATI's driver development team lay behind these articles[citation needed]. While NVIDIA did partially close the performance gap with new instruction-reordering capabilities introduced in later drivers, shader performance remained weak and over-sensitive to hardware-specific code compilation. Nvidia worked with Microsoft to release an updated DirectX compiler that generated code optimized for the GeForce FX.

Furthermore, GeForce FX devices also ran hot, because they drew as much as double the amount of power as equivalent parts from ATI. The GeForce FX 5800 Ultra became notorious for its fan noise, and acquired the nicknames "dustbuster" and "leafblower." Nvidia jokingly acknowledged these accusations with a video in which the marketing team compares the cards to a Harley-Davidson motorcycle.[26] Although the quieter 5900 replaced the 5800 without fanfare, the FX chips still needed large and expensive fans, placing Nvidia's partners at a manufacturing cost disadvantage compared to ATI.

Apparently as a culmination of these corporate-level events and the resulting weaknesses of the FX series, Nvidia ceded its market leadership position to ATI.

GeForce 6 series

With the GeForce 6 series, Nvidia moved beyond the DirectX 9 performance problems that had plagued the previous generation. The GeForce 6 series performed competitively on Direct3D shaders and also supported DirectX Shader Model 3.0, while ATI's competing X800 series chips supported only the previous 2.0 specification. This proved an insignificant advantage, mainly because games of that period did not use Shader Model 3.0 features. However, it demonstrated Nvidia's desire and ability to design the newest features and deliver them within a specific timeframe.

During this period it became clearer that the products of the two firms, ATI and Nvidia, offered equivalent performance. The two firms traded the performance lead in specific titles and under specific criteria (resolution, image quality, anisotropic filtering/anti-aliasing), but the differences were becoming more abstract. As a result, the price/performance ratio became the main concern in comparisons between the two. The mid-range offerings of both firms demonstrated consumer appetite for affordable, high-performance graphics cards, and this price segment came to determine much of each firm's profitability.

The GeForce 6 series arrived at an opportune time: the game Doom 3 had just been released, and ATI's Radeon 9700 was found to struggle with OpenGL performance in the game. In 2004, the GeForce 6800 performed excellently, while the GeForce 6600GT became as important to Nvidia as the GeForce2 MX had been a few years earlier.[clarification needed] The GeForce 6600GT let users play Doom 3 at very high resolutions and graphical settings, which had been thought highly unlikely at its selling price. The GeForce 6 series also introduced SLI, which resembles technology that 3dfx had used with the Voodoo2. A combination of SLI and other hardware performance gains returned Nvidia to market leadership.

The GeForce 6 series represents the first generation of Nvidia PCI-E cards.

The GeForce 7 series was a substantially upgraded extension of the reliable 6 series. The introduction of the PCI Express bus standard allowed NVIDIA to release SLI (Scalable Link Interface), a solution in which two similar cards share the rendering workload. Such setups do not double performance and require more electricity (two cards instead of one). However, they can make a large difference at higher resolutions and settings and, more importantly, offer more upgrade flexibility. ATI responded with the X1000 series and with a dual-rendering solution called "ATI CrossFire". Sony selected Nvidia to develop the "RSX" chip (a modified version of the 7800 GPU) used in the PlayStation 3.

Unified Architecture with the 8-series and later


Nvidia released a GeForce 8 series chip towards the end of 2006, making the 8 series the first to support Microsoft's next-generation DirectX 10 specification. The 8 series GPUs also featured the revolutionary Unified Shader Architecture, and Nvidia leveraged this to provide better support for General Purpose Computing on GPU (GPGPU). A new product line of "compute only" devices called Nvidia Tesla emerged from the G80 architecture, and subsequently Nvidia also became the market leader[citation needed] of this new field by introducing the world's first C programming language API for GPGPU, CUDA.

In June 2008, Nvidia released its new flagship GPUs, the GTX 280 and GTX 260. These cards used the same basic unified architecture as the previous 8 and 9 series cards, but with more processing power. Both cards are based on the GT200 GPU, which contains 1.4 billion transistors on a 65 nm fabrication process. The GTX 280 has 240 shaders (stream processors) and the GTX 260 has 192 (revised in September 2008 to 216). The GTX 280 has 1 GB of GDDR3 VRAM on a 512-bit memory bus, and the GTX 260 has 896 MB of GDDR3 VRAM on a 448-bit memory bus. The GTX 280 allegedly provides approximately 933 GFLOPS of floating-point power.[citation needed]
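Theoretical peak figures such as the 933 GFLOPS quoted above are usually calculated as shader count times shader clock times floating-point operations issued per shader per clock. The following is a minimal Python sketch of that arithmetic. The 240-shader count comes from the text. The 1296 MHz shader clock and the figure of 3 FLOPs per clock (a multiply-add counted as 2 FLOPs plus a co-issued multiply) are assumptions not stated in this article, and the function and constant names are hypothetical.

```python
def peak_gflops(shaders: int, shader_clock_mhz: float, flops_per_clock: int) -> float:
    """Theoretical peak: shaders x shader clock x FLOPs issued per shader per clock."""
    return shaders * shader_clock_mhz * flops_per_clock / 1000.0  # MFLOPS -> GFLOPS


# The 240-shader count comes from the text. The 1296 MHz shader clock and the
# figure of 3 FLOPs per clock (a multiply-add counted as 2 FLOPs plus a
# co-issued multiply) are assumptions not stated above.
GTX_280_SHADERS = 240
GTX_280_SHADER_CLOCK_MHZ = 1296
FLOPS_PER_CLOCK = 3

if __name__ == "__main__":
    gflops = peak_gflops(GTX_280_SHADERS, GTX_280_SHADER_CLOCK_MHZ, FLOPS_PER_CLOCK)
    print(f"GTX 280 peak: {gflops:.1f} GFLOPS")
```

Under these assumptions the result is about 933 GFLOPS, which matches the quoted figure. As a theoretical peak, it says nothing about sustained throughput in real workloads.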

Nvidia launched the GeForce 400 series on March 26, 2010, presenting the GTX 470 and GTX 480 to the public at PAX East 2010.[27] These flagship products were power-hungry and ran hot. Their price-to-performance ratio was comparable to that of competing ATI products, but their power efficiency was poor. Nvidia has since released the GTX 465, the GTX 460, and the GTS 450. The GTX 465 used the GF100, the same GPU as in the flagship models, with some functional units disabled, which allowed the card to be priced slightly lower. The first real improvements came with the GTX 460 and GTS 450, built on the GF104 and GF106 chips respectively, which are more efficient and cost-effective derivatives of the GF100 design. At the time of writing, Nvidia offered current hardware at every market price point.

GPU controversies for Laptops/Notebooks

In July 2008, Nvidia noted increased failure rates in certain mobile video adapters.[28] In response, Dell and HP released BIOS updates for all affected notebook computers. The updates turn on the cooling fan at lower temperatures than previously configured, in an effort to keep the defective video adapter from reaching higher temperatures. Leigh Stark of APC Magazine has suggested that this may cause premature failure of the cooling fan.[29] This workaround may only postpone component failure until after the warranty expires.

At the end of August 2008, Nvidia reportedly issued a product change notification announcing plans to update the bump material of GeForce 8 and 9 series chips "to increase supply and enhance package robustness."[30] In response to possible defects in some mobile video adapters from NVIDIA, some[which?] notebook manufacturers have allegedly turned to ATI for graphics options on their new Montevina notebook computers.[31]

On August 18, 2008, according to the direct2dell.com blog, Dell began to offer a 12-month limited warranty "enhancement" specific to this issue on affected notebook computers worldwide.[32]

On September 8, 2008, Nvidia made a deal with large OEMs, including Dell and HP, under which Nvidia would pay manufacturers $200 per affected notebook as compensation for the defects.[33]

On October 9, 2008, Apple Inc. announced on a support page that some MacBook Pro notebook computers had exhibited faulty Nvidia GeForce 8600M GT graphics adapters.[34] The manufacture of affected computers took place between approximately May 2007 and September 2008. Apple also stated that it would repair affected MacBook Pros within three years of the original purchase date free of charge and also offered refunds to customers who had paid for repairs related to this issue.

Source: Wikipedia